A few years ago, under the name bad_control, I released a breakbeat track called usu tympanum 03 on my label, Interocitor Records. It’s been sitting in my library ever since. This week, on a whim, I uploaded it to Claude and asked for a music video.

Not a storyboard. Not a mood board. A finished, rendered, two-minute audio-synced video.

The brief was roughly: “Early 90s rave aesthetic. Chris Cunningham meets Aphex Twin. Really glitchy. Amiga demo scene mixed with early 90s hacker visuals.” If you’ve spent any time around the demoscene or remember what pirate BBS screens looked like, you know the vibe I was after.

What came back was genuinely surprising. Watch the full video here.

The Pipeline

Claude’s approach was more methodical than I expected. It started by analysing the audio file, running FFT spectral decomposition across the full track to extract per-frame data at 30fps. That’s 3,686 frames, each tagged with normalised values for bass energy, mid-range, high-frequency content, ultra-high, spectral centroid, zero-crossing rate, and a binary onset detection flag.
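I didn't keep Claude's analysis script, so what follows is only a rough sketch of what per-frame FFT analysis can look like with numpy. The function name, the window size and the band edges are my own assumptions, not the values used for the video, and it returns raw values rather than normalised ones.

```python
import numpy as np

def frame_features(samples, sample_rate, fps=30, window=2048):
    """Return one dict of raw (un-normalised) features per video frame."""
    hop = sample_rate // fps                      # audio samples per video frame
    freqs = np.fft.rfftfreq(window, d=1.0 / sample_rate)
    hann = np.hanning(window)
    # Band edges in Hz are illustrative guesses, not the video's real values.
    bands = {"bass": (20, 250), "mid": (250, 4000), "high": (4000, 12000)}
    features = []
    for start in range(0, len(samples) - window + 1, hop):
        chunk = samples[start:start + window]
        mag = np.abs(np.fft.rfft(chunk * hann))   # magnitude spectrum
        row = {name: float(mag[(freqs >= lo) & (freqs < hi)].sum())
               for name, (lo, hi) in bands.items()}
        total = mag.sum()
        # Spectral centroid: magnitude-weighted mean frequency.
        row["centroid"] = float((freqs * mag).sum() / total) if total > 0 else 0.0
        # Zero-crossing rate: fraction of adjacent samples that change sign.
        signs = np.signbit(chunk)
        row["zcr"] = float(np.mean(signs[1:] != signs[:-1]))
        features.append(row)
    return features
```

Normalising each feature to the 0..1 range across the whole track would be a separate pass over the returned list.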

The onset detection is the important bit. That’s what tells the system “a beat just hit.” It found 553 onsets across the two-minute track. Those would go on to drive mode transitions, glitch intensity, text flashes, and shape scaling.
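I don't know exactly which onset algorithm Claude used. A common simple approach is to flag frames where the energy jumps well above its typical frame-to-frame change, and a minimal sketch of that idea looks like this. The `k` and `min_gap` parameters are hypothetical tuning knobs.

```python
from statistics import mean, pstdev

def detect_onsets(energy, k=1.5, min_gap=2):
    """Flag frames where energy rises sharply compared with the previous frame.

    energy: one value per video frame (for example summed spectral magnitude).
    """
    # Half-wave rectified difference: only increases in energy count.
    flux = [0.0] + [max(0.0, b - a) for a, b in zip(energy, energy[1:])]
    threshold = mean(flux) + k * pstdev(flux)
    onsets, last = [], -min_gap
    for i, value in enumerate(flux):
        # Require a gap between flags so one hit is not counted twice.
        is_onset = value > threshold and i - last >= min_gap
        onsets.append(is_onset)
        if is_onset:
            last = i
    return onsets
```

The result is one boolean per frame, which matches the binary flag described above. The rectified difference itself can double as an onset strength value.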

With the analysis done, Claude wrote a complete Python renderer from scratch using Pillow and numpy. No GPU. No shader pipeline. No video editing software. Just Python generating PNG frames one at a time in a cloud container, then FFmpeg stitching them together with the original audio track.
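For anyone who hasn't done the stitching step before, here is a sketch of the kind of FFmpeg invocation involved, built as a Python argument list. The file names are placeholders and the exact flags Claude chose may have differed. These are just standard options for turning a numbered PNG sequence plus an audio file into an H.264/AAC MP4.

```python
def ffmpeg_command(frame_pattern, audio_path, out_path, fps=30):
    """Build the argument list for muxing numbered PNG frames with audio."""
    return [
        "ffmpeg", "-y",               # overwrite the output if it exists
        "-framerate", str(fps),       # frame rate of the image sequence
        "-i", frame_pattern,          # e.g. frames/frame_%05d.png
        "-i", audio_path,
        "-c:v", "libx264",
        "-pix_fmt", "yuv420p",        # widely compatible pixel format
        "-c:a", "aac",
        "-shortest",                  # stop at the shorter of the two inputs
        out_path,
    ]

# Hypothetical paths, for illustration only.
cmd = ffmpeg_command("frames/frame_%05d.png", "track.wav", "video.mp4")
# To run it: import subprocess; subprocess.run(cmd, check=True)
```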

Total wall time from first prompt to finished MP4: roughly ten minutes, including the conversation.

Version One: The Uniform Visualiser

The first version was a single continuous visualiser. It had all the right ingredients — phosphor green oscilloscope waveform, rotating wireframe geometry, hex dumps scrolling down the left edge, a spectrum analyser at the bottom, matrix rain, copper bars. Everything was audio-reactive. Bass drove the tunnel effect depth. High frequencies modulated the scanline intensity. Onsets triggered glitch displacement and RGB channel splits.

It looked decent. But two minutes of the same visual language gets old fast, no matter how reactive it is. The geometry in the centre — a triangle, a square, a hexagon — felt weak. The hacker text was too small to read. And while it was a visualiser, it was one visualiser. Monotone.

The Feedback

I gave one round of notes. More variety. Bigger text. Proper 90s 3D rendering instead of those flat polygons. And the big one: can it cycle through completely different visual modes, like a VJ switching between banks, with glitch transitions between them?

Claude scrapped the entire renderer and rebuilt it from scratch.

Version Two: Eight Modes

The new version has eight distinct visual modes, each with its own personality:

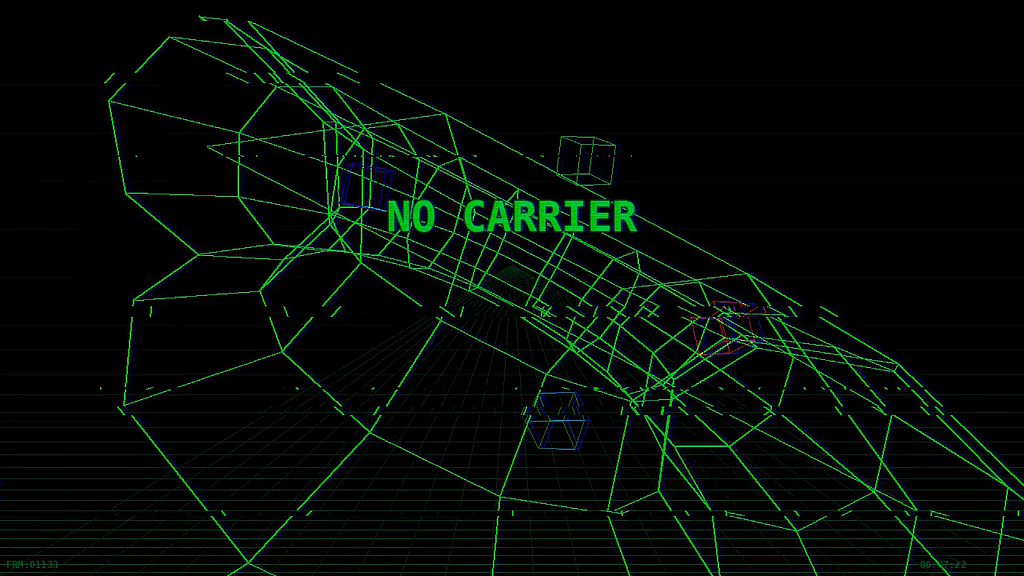

Wireframe Museum — rotating 3D torus, icosahedron, and octahedron with a Tron-style perspective grid floor. Smaller cubes orbit the central shape. All built with a hand-rolled 3D projection engine: vertices, edges, rotation matrices, perspective divide. No 3D library. Just maths.

Hacker Terminal — full-screen scrolling green phosphor text. Hex dumps, assembly code (MOV D0, $DFF000), modem connection strings, Amiga chipset references. Massive red warning text smashes across the screen on beat hits. There's a blinking cursor at the bottom and a fake decryption progress bar that tracks the bass energy.

Plasma Copper — classic Amiga demoscene. Plasma interference patterns rendered at quarter resolution and upscaled with nearest-neighbour sampling for that chunky pixel look, overlaid with rainbow copper bars scrolling in time with the music. If you ever sat in front of an A500 watching a Kefrens demo, this one’s for you.

Matrix Rain — dense katakana character columns with depth simulation and head/trail brightness. Rain speed is tied to bass energy. Big text punches through the rain on onsets.

Oscilloscope — green waveform driven by spectral centroid and RMS energy, with a Lissajous figure in a boxed display top-right, a 32-band spectrum analyser across the bottom, and live HUD readouts showing RMS, bass, frequency, and zero-crossing rate. This is the one that looks most like actual lab equipment.

Tron Tunnel — dual perspective grids (floor and ceiling) converging at a cyan horizon line, with wireframe shapes flying through the space. Speed lines burst from the centre on heavy bass. Purple and blue palette.

Glitch Typography — the screen fills with repeated word patterns as a background texture, while massive glitched words dominate the centre with multiple offset colour copies. Text changes on beat hits. Secondary hacker strings scroll underneath.

Starfield Warp — star trails with motion blur streaking from a central vanishing point. Wireframe shapes fly towards the camera. Radial speed lines fire on bass hits. A HUD reads “WARP FACTOR: 9.2” because why not.
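The hand-rolled 3D behind the wireframe shapes comes down to surprisingly little code. This is my own minimal sketch of the technique (rotate, perspective divide, join the dots), not the renderer Claude wrote. The focal length, camera distance and function names are assumptions.

```python
import math

def rotate_y(point, angle):
    """Rotate an (x, y, z) point around the vertical axis by angle radians."""
    x, y, z = point
    c, s = math.cos(angle), math.sin(angle)
    return (c * x + s * z, y, -s * x + c * z)

def project(point, width=1280, height=720, focal=600.0, camera_z=4.0):
    """Perspective divide: scale x and y by focal / depth, then centre on screen."""
    x, y, z = point
    depth = z + camera_z             # camera sits camera_z units from the origin
    scale = focal / depth
    return (width / 2 + x * scale, height / 2 - y * scale)

# Cube: 8 vertices, and the 12 edges joining vertices that differ on one axis.
CUBE_VERTICES = [(x, y, z) for x in (-1, 1) for y in (-1, 1) for z in (-1, 1)]
CUBE_EDGES = [(i, j) for i in range(8) for j in range(i + 1, 8)
              if sum(a != b for a, b in zip(CUBE_VERTICES[i], CUBE_VERTICES[j])) == 1]

def wireframe_lines(angle):
    """Return the cube's edges as 2D line segments after rotating by angle."""
    pts = [project(rotate_y(v, angle)) for v in CUBE_VERTICES]
    return [(pts[i], pts[j]) for i, j in CUBE_EDGES]
```

Each segment can then be drawn with Pillow's `ImageDraw.line`, and advancing `angle` a little every frame gives the rotation.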

The Transition System

The modes don’t just alternate on a timer. Claude pre-computes a mode schedule based on the audio analysis. It shuffles the mode order, cycles through all eight, and holds each for 4–12 seconds depending on the energy of the section. Transitions are triggered by strong beat onsets — when the onset strength passes a threshold and the current mode has been running for at least four seconds, it cuts.
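To make that logic concrete, here is a simplified sketch of a beat-triggered schedule. It is my reconstruction from the description above, not Claude's code. In particular, it treats the 12-second figure as a hard cap rather than scaling hold time by section energy, and the threshold value is a guess.

```python
import random

def build_schedule(onset_strength, modes, fps=30, min_hold=4.0, max_hold=12.0,
                   threshold=0.8, seed=0):
    """Return a list of (start_frame, mode) pairs.

    A cut happens when a strong onset arrives after min_hold seconds,
    or unconditionally once max_hold seconds have passed.
    """
    rng = random.Random(seed)
    order = list(modes)
    rng.shuffle(order)                       # shuffled once, then cycled
    schedule = [(0, order[0])]
    position, last_cut = 0, 0
    for frame, strength in enumerate(onset_strength):
        held = (frame - last_cut) / fps      # seconds spent in the current mode
        strong_hit = strength >= threshold and held >= min_hold
        if strong_hit or held >= max_hold:
            position = (position + 1) % len(order)
            schedule.append((frame, order[position]))
            last_cut = frame
    return schedule
```

Because the order is shuffled once and then cycled, every mode gets screen time before any of them repeats.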

The cut itself is heavy: big block displacement, colour channel separation, random coloured blocks scattered across the frame. It looks like the signal is being torn apart for a couple of frames, then the new mode snaps in clean. 25 transitions across the full track.

On top of all that, every frame gets post-processed: CRT scanlines (intensity modulated by high frequencies), RGB channel splitting (scaled to energy and onset strength), random micro-glitches at a 4% chance per frame, and occasional full-frame colour flashes on the biggest beats.
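A post-processing pass like this is easy to express with numpy array operations. The sketch below is mine and heavily simplified. The shift sizes and darkening amounts are invented, and the RGB split is driven by onset strength alone rather than by energy as well.

```python
import numpy as np

def post_process(frame, high, onset, rng, glitch_chance=0.04):
    """Apply scanlines, an RGB split and an occasional micro-glitch.

    frame: uint8 array of shape (height, width, 3); high and onset are 0..1.
    rng: a numpy Generator, e.g. np.random.default_rng().
    """
    out = frame.astype(np.float32)
    # Scanlines: darken every other row, more strongly when highs are loud.
    out[1::2] *= 1.0 - 0.5 * high
    # RGB split: shift red left and blue right by a few pixels on strong onsets.
    shift = int(round(8 * onset))
    if shift:
        out[..., 0] = np.roll(out[..., 0], -shift, axis=1)
        out[..., 2] = np.roll(out[..., 2], shift, axis=1)
    # Micro-glitch: with a small per-frame chance, slide an 8-row band sideways.
    if rng.random() < glitch_chance:
        top = int(rng.integers(0, out.shape[0] - 8))
        out[top:top + 8] = np.roll(out[top:top + 8], int(rng.integers(4, 32)), axis=1)
    return np.clip(out, 0, 255).astype(np.uint8)
```

A Pillow image converts to this array form with `np.asarray(image)` and back with `Image.fromarray(array)`.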

The Numbers

The output is 1280×720, 30fps, H.264 with AAC audio. About 70MB for two minutes and three seconds. 3,686 rendered frames across eight visual modes.
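As a quick sanity check, the figures quoted in this post hang together. The only assumption in the arithmetic below is that "about 70MB" means 70 million bytes.

```python
frames, fps = 3686, 30
onsets, transitions = 553, 25
size_mb = 70                                     # assumed to mean 70 * 10**6 bytes

duration = frames / fps                          # seconds of video
minutes, seconds = divmod(round(duration), 60)   # rounded running time
onsets_per_second = onsets / duration
seconds_per_mode = duration / (transitions + 1)  # 25 cuts give 26 segments
bitrate_mbps = size_mb * 8 / duration            # combined video + audio

print(f"{minutes}:{seconds:02d}, {onsets_per_second:.1f} onsets/s, "
      f"{seconds_per_mode:.1f} s per mode, {bitrate_mbps:.2f} Mbit/s")
```

That works out to roughly 4.5 onsets per second, an average hold of a little under five seconds per mode (inside the 4 to 12 second window), and an overall bitrate of about 4.6 Mbit/s.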

No plugins. No After Effects. No templates. No GPU. Python, Pillow, numpy, and FFmpeg in a cloud container.

Why I Think This Is Interesting

I’ve spent 30+ years making games. I helped create Croc at Argonaut. I’ve worked on Spyder, Warframe, Firebreak. These days, as Design Director at Sumo Digital, a significant part of my role is figuring out where AI fits into game development workflows.

This music video experiment isn’t about the video itself — it’s about what the process demonstrates. An AI took a specific creative brief with niche aesthetic references (Chris Cunningham, Amiga demoscene, 90s hacker culture), understood what those references implied visually, analysed source audio at the frequency level, wrote a complete procedural rendering system, took a round of creative feedback, and rebuilt the entire thing accordingly.

The creative feedback loop is the part that stuck with me. “The geometry is too weak, the text is too small, I want more variety” isn’t a technical specification. It’s the kind of note a creative director gives an artist. And the response — scrapping V1 entirely and designing a multi-mode system with beat-synced transitions — is the kind of response you’d want from a good one.

For game dev specifically, the applications are obvious: procedural VFX that respond to audio or game state, rapid visual style prototyping before committing to a shader pipeline, UI animation systems driven by data, cutscene pre-visualisation from text descriptions. We’re in throwaway prototype territory and it already looks like a VJ set.

The tools are only getting better.

Try It Yourself

If you have a Claude account, the barrier to entry is zero. Upload an audio file and describe what you want. The quality of the output scales with the specificity of your brief — “make it look cool” will give you something generic, but “early 90s rave meets Amiga demoscene with phosphor green copper bars and hacker terminal aesthetics” gives the system a very specific design space to work in.

Give it something weird. See what happens.

bad_control: usu tympanum 03, released on Interocitor Records.

If you’re experimenting with AI in creative or game dev workflows, I’d love to hear what you’re finding. Drop a comment or reach out directly.