I am not a good investor. I want to get that out of the way early, because if you’re reading this hoping for stock tips, the best advice I can give you is to do the opposite of whatever I would do.

My personal investment track record includes buying into companies at their peak because “the chart looks like it’s going up,” holding through catastrophic drops because “it’ll come back,” and a general inability to sell anything before it’s too late. I have the financial instincts of a golden retriever chasing a ball into traffic.

So naturally, I decided to build an autonomous AI-powered trading system.

How This Actually Started

The opportunity landed when Trading212 opened up their API to paid accounts. If you’re not familiar, Trading212 is a UK-based trading platform — think commission-free stock trading with a clean interface. For years, if you wanted to do anything programmatic with it, you were out of luck. Then they quietly rolled out API access, and suddenly you could read positions, place orders, and manage a portfolio through code.

For someone who builds real-time systems for a living, that’s a very dangerous combination of “I could do something with this” and “I probably shouldn’t.” So evenings and weekends it was.

I should stress right now — and I’ll keep stressing it — that everything you’re about to read is a spare-time side project running on the demo account. Trading212 provides a practice mode with fake money, and that’s where this system lives. The whole thing runs on a £130 mini PC sitting in my living room. I’m reckless, but I’m not stupid. Well, I might be stupid, but at least I’m not financially stupid. Not anymore. Not since the incident we don’t talk about.

Enter Claude Code

Here’s where this gets interesting for anyone who works in software. The entire trading system was built collaboratively with Claude Code — Anthropic’s command-line coding tool. Think of it less like autocomplete and more like pair programming with a very patient, very fast colleague who never judges you for your fourth refactor of the same function.

I’m not a Python developer by trade: 30+ years of game development means C# and game engines are my home turf. But the workflow with Claude Code settled into a rhythm quickly. I’d describe the architecture or feature I wanted, it would produce working implementations, and we’d iterate. I’d push back on design decisions, it would explain tradeoffs, and we’d land somewhere neither of us would have reached alone.

The result is a system that’s more sophisticated than I had any right to build in stolen evenings and weekends. Not because the AI did all the thinking — it didn’t — but because the velocity of development let me iterate on ideas I’d normally have abandoned as “too ambitious for a side project.”

The Architecture (Where It Gets Familiar)

About two weeks in, I had a realisation. The system I was building to trade stocks looked almost identical to the analytics systems I’ve spent years wishing existed for live games.

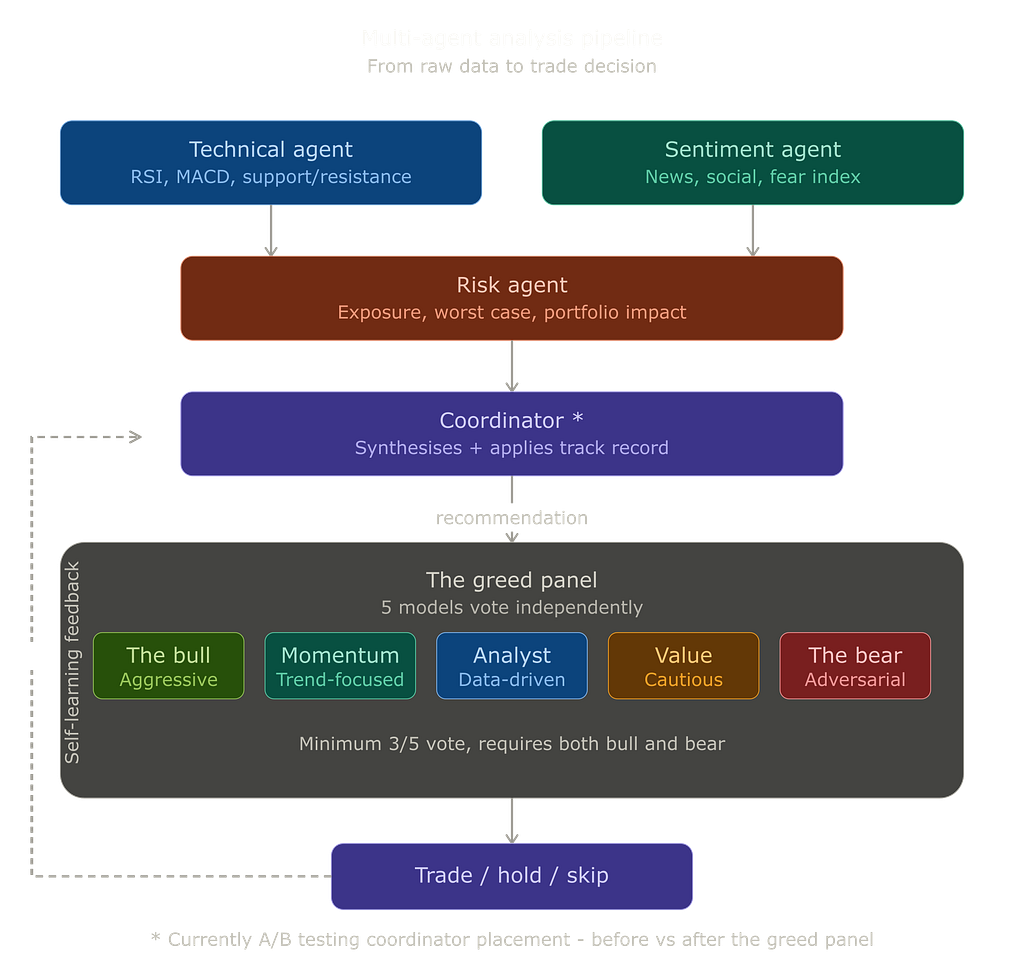

The core architecture uses four specialised AI agents running in concert:

A Technical Agent that crunches raw market data — price patterns, volume, momentum indicators. This is your telemetry pipeline. Numbers in, analysis out, no opinions attached.

A Sentiment Agent that reads the room — news, social media, market fear indicators. For anyone who’s shipped a live game, this is the thing you always wanted but never had: an automated system scraping Reddit, Discord, and Steam reviews to tell you how players feel about your last patch, not just what the data says they’re doing.

A Risk Agent that asks the awkward questions. How exposed are we? What’s the worst case? What does this decision do to everything else we’ve already committed to? This is your QA lead in AI form — the one who says “yes, the feature is cool, but have you stress-tested what happens when 10,000 players do it simultaneously?”

And a Coordinator that pulls it all together, weighs the evidence, and makes a recommendation. Critically, it also gets fed the system’s own track record — more on that in a moment.
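To make the shape of that pipeline concrete, here’s a minimal Python sketch. It isn’t the bot’s code (that stays private). The three analysts are toy rules standing in for LLM calls, and the -1 to +1 scoring scale, the weights, the 0.2 buy threshold and the way the recent win rate damps confidence are all assumptions for illustration. What it does show is the structure: independent analysts each return a report, and a coordinator blends them alongside the system’s own track record.

```python
from dataclasses import dataclass


@dataclass
class AgentReport:
    agent: str
    score: float  # assumed scale: -1.0 (strongly against) to +1.0 (strongly for)
    notes: str


@dataclass
class Recommendation:
    action: str        # "buy" or "hold"
    confidence: float  # 0.0 to 1.0
    reports: list


def clamp(x: float) -> float:
    return max(-1.0, min(1.0, x))


# Stand-in agents. In the real system each one is an LLM call; these are toy rules.
def technical_agent(data: dict) -> AgentReport:
    closes = data["closes"]
    momentum = (closes[-1] - closes[0]) / closes[0]
    return AgentReport("technical", clamp(momentum * 10), f"momentum {momentum:+.1%}")


def sentiment_agent(data: dict) -> AgentReport:
    return AgentReport("sentiment", clamp(data["sentiment"]), "news and social tone")


def risk_agent(data: dict) -> AgentReport:
    # The more capital already committed, the stronger the case against adding more.
    return AgentReport("risk", clamp(-data["portfolio_exposure"]), "exposure check")


AGENTS = [technical_agent, sentiment_agent, risk_agent]
WEIGHTS = {"technical": 0.4, "sentiment": 0.3, "risk": 0.3}  # hypothetical weights


def coordinator(reports: list, recent_win_rate: float) -> Recommendation:
    total_weight = sum(WEIGHTS[r.agent] for r in reports)
    blended = sum(WEIGHTS[r.agent] * r.score for r in reports) / total_weight
    # Track-record feedback: scale confidence down when recent results are poor.
    confidence = min(1.0, abs(blended) * min(1.0, recent_win_rate / 0.5))
    action = "buy" if blended > 0.2 else "hold"
    return Recommendation(action, round(confidence, 3), reports)


def analyse(data: dict, recent_win_rate: float) -> Recommendation:
    return coordinator([agent(data) for agent in AGENTS], recent_win_rate)
```

Keeping every agent as a plain function with the same signature means a toy rule can be swapped for a real model call without touching the coordinator.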

If you’ve ever sat in a balance review meeting with a designer, a data analyst, a community manager, and a producer all arguing about whether to nerf the shotgun, you’ve experienced the human version of this pipeline. The difference is mine runs in seconds and doesn’t require booking a meeting room.

The Greed Panel

This is probably my favourite part of the system, and also the part that has the most direct application to game development.

After the four agents produce their recommendation, the system doesn’t just execute it. It convenes a panel of five different AI models — each with a deliberately different personality — and makes them vote.

I call it the Greed Panel.

Each model sits on a spectrum from optimistic to adversarial. There’s The Bull, who finds reasons to buy. The Momentum Chaser, who cares about trends. The Analyst, who just wants to see the numbers. The Value Investor, who thinks everything is too expensive. And The Bear, whose entire job is to find reasons this trade will fail.

They vote. 5/5 means high conviction. 3/5 means proceed with caution. 2/5 or less means walk away.
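The voting step itself is small. Here’s a sketch under a few stated assumptions: the persona prompts are paraphrased from the descriptions above, a single `voter` callable stands in for the five separate models, and 4/5 is grouped with 3/5 as “proceed with caution” because the thresholds above don’t say where it falls.

```python
from typing import Callable

# Persona prompts paraphrased from the post; the exact wording is an assumption.
PERSONAS = {
    "The Bull": "Look for reasons to buy.",
    "The Momentum Chaser": "Judge the trade on its trend.",
    "The Analyst": "Judge the trade on the numbers alone.",
    "The Value Investor": "Assume the stock is too expensive until shown otherwise.",
    "The Bear": "Find every reason this trade will fail.",
}

# A voter takes (persona prompt, candidate) and returns True to approve.
# In the real system each persona runs on a different model; here it is one callable.
Voter = Callable[[str, dict], bool]


def convene_panel(candidate: dict, voter: Voter) -> dict:
    votes = {name: voter(prompt, candidate) for name, prompt in PERSONAS.items()}
    yes = sum(votes.values())
    if yes == len(PERSONAS):
        verdict = "high conviction"
    elif yes >= 3:
        verdict = "proceed with caution"  # grouping 4/5 here is an assumption
    else:
        verdict = "walk away"
    return {"votes": votes, "yes": yes, "verdict": verdict}
```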

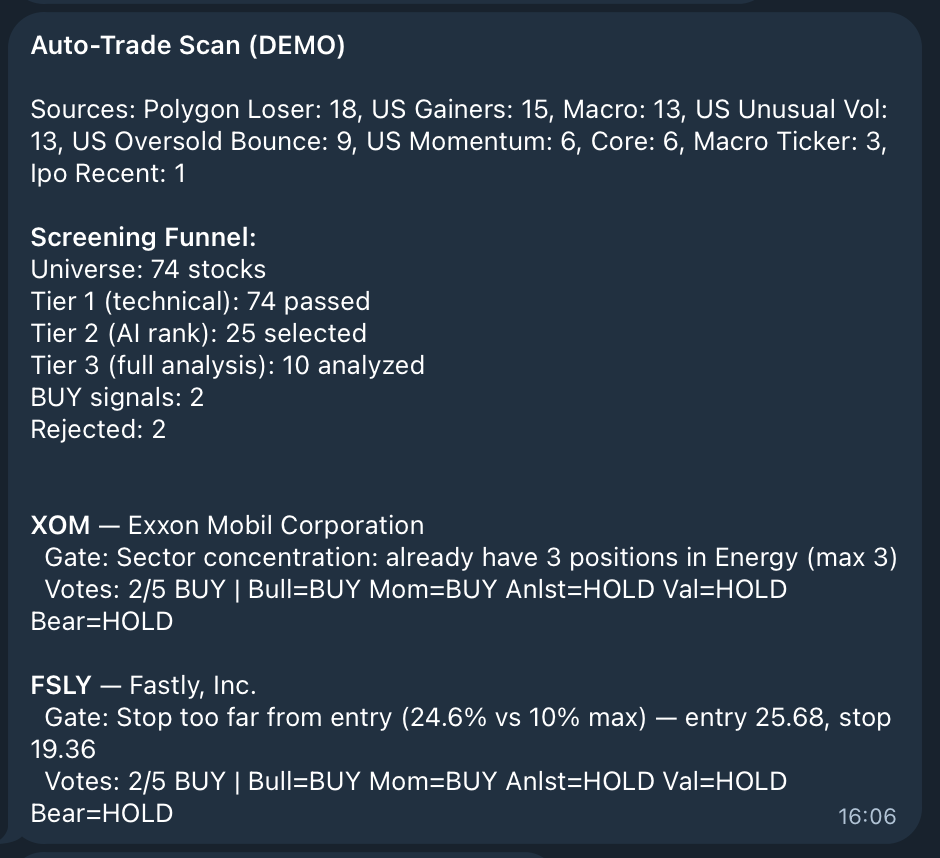

The panel has analysed over 1,200 stocks so far, and it rejects nearly two-thirds of what the analysis pipeline recommends. Which, given my personal track record of buying everything that looks shiny, is probably for the best.

Now replace “stocks” with “balance changes” and “buy/sell” with “ship/hold” and you’ve got something every game studio needs: an automated balance committee that runs in seconds, gives you a confidence score, and doesn’t get derailed by someone’s pet feature.

The Self-Learning Loop

This is where the game developer in me got genuinely excited, because it’s the system I’ve wanted to build for every live game I’ve ever worked on.

Every trade follows a closed feedback loop:

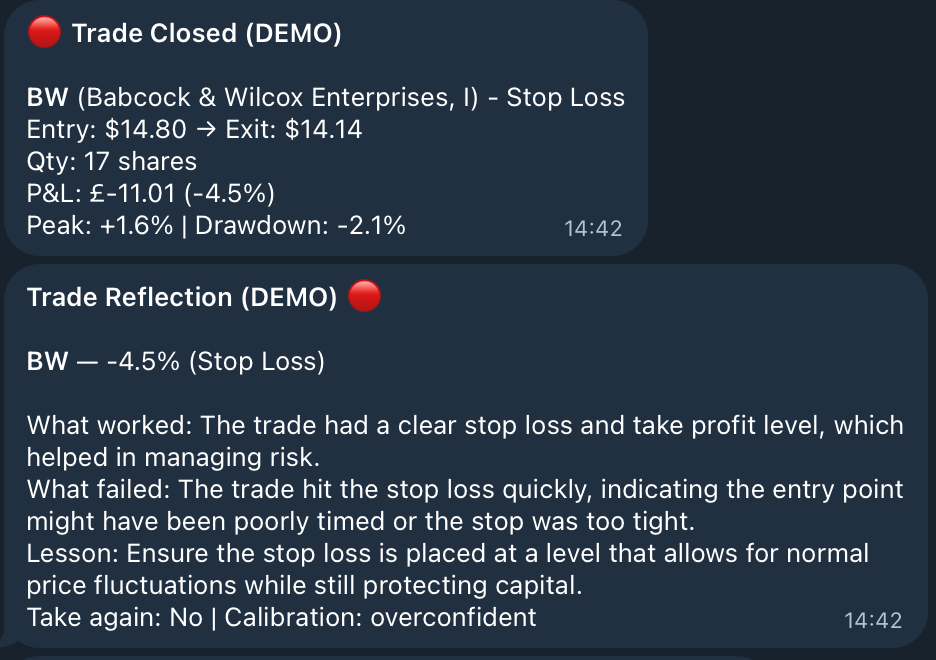

When a trade is placed, the system captures everything it knew at the time — scores, market conditions, conviction level, which models voted which way. It then monitors the position every 15 minutes, tracking the best it ever reached and the worst it ever got. When the trade closes, it sends the full lifecycle to an AI “coach” that produces a structured reflection — what worked, what failed, a one-sentence lesson, and whether it would take the same trade again.
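Here’s roughly what that capture-and-track step could look like, as a sketch rather than the real implementation. `TradeRecord`, its field names and the reflection schema (`what_worked`, `what_failed`, `lesson`, `would_take_again`) are hypothetical, and the excursion maths assumes a long position. The coach’s reply is validated before it goes anywhere near the learning loop.

```python
import json
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TradeRecord:
    ticker: str
    entry_price: float
    context: dict  # snapshot at entry: scores, market conditions, conviction, panel votes
    best_price: float = field(init=False)
    worst_price: float = field(init=False)
    exit_price: Optional[float] = None

    def __post_init__(self) -> None:
        self.best_price = self.worst_price = self.entry_price

    def observe(self, price: float) -> None:
        # Called on every 15-minute check; assumes a long position.
        self.best_price = max(self.best_price, price)
        self.worst_price = min(self.worst_price, price)

    def _pct(self, price: float) -> float:
        return round((price - self.entry_price) / self.entry_price * 100, 2)

    def close(self, price: float) -> dict:
        self.observe(price)
        self.exit_price = price
        return {
            "ticker": self.ticker,
            "context": self.context,
            "return_pct": self._pct(price),
            "mfe_pct": self._pct(self.best_price),   # maximum favourable excursion
            "mae_pct": self._pct(self.worst_price),  # maximum adverse excursion
        }


# Hypothetical schema for the coach's structured reflection.
REFLECTION_KEYS = {"what_worked", "what_failed", "lesson", "would_take_again"}


def parse_reflection(raw: str) -> dict:
    reflection = json.loads(raw)
    missing = REFLECTION_KEYS - set(reflection)
    if missing:
        raise ValueError(f"coach reply missing fields: {sorted(missing)}")
    return reflection
```

The lifecycle dict returned by `close` is what gets handed to the coach; the parsed reflection is what gets stored.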

Those reflections get deduplicated by theme and fed back into future decisions. The system literally tells the AI: “Here’s your track record. Here’s what you’ve learned. Now make the next call.”
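A minimal sketch of the dedupe-and-feed-back step, assuming each reflection already carries a short `theme` label. Grouping here is a simple normalised exact match, which is an assumption rather than how the real system does it; the prompt wording mirrors the quote above and the helper names are made up.

```python
def dedupe_by_theme(reflections: list) -> list:
    """Collapse reflections that share a theme, keeping the latest lesson and a count."""
    themes: dict = {}
    for r in reflections:
        key = r["theme"].strip().lower()
        entry = themes.setdefault(key, {"theme": key, "lesson": r["lesson"], "count": 0})
        entry["count"] += 1
        entry["lesson"] = r["lesson"]  # later reflections overwrite earlier phrasing
    # Most frequently recurring themes first; ties keep first-seen order (sort is stable).
    return sorted(themes.values(), key=lambda t: t["count"], reverse=True)


def build_context(wins: int, losses: int, themes: list, max_lessons: int = 5) -> str:
    lines = [f"Here's your track record: {wins} wins, {losses} losses."]
    lines.append("Here's what you've learned:")
    lines += [f"- ({t['count']}x) {t['lesson']}" for t in themes[:max_lessons]]
    lines.append("Now make the next call.")
    return "\n".join(lines)
```

Capping the lesson count keeps the prompt short, and ordering by recurrence means the most-repeated mistakes are the ones the model sees first.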

It also generates mandatory rules from statistical evidence. Things like: “48% of stopped-out trades later recovered past the target — widen your stop losses” and “high conviction trades have only a 28% win rate — downgrade your confidence.” These rules are enforced. The AI can’t override them, no matter how bullish the panel gets.
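The important property is that rules come from counts, not vibes, and are applied after the model has had its say. The sketch below illustrates both halves; the minimum sample size, the 40% trigger levels, the 1.5× stop multiplier and the rule names are hypothetical values chosen for the example.

```python
MIN_SAMPLE = 10  # hypothetical: don't derive a rule from fewer trades than this


def derive_rules(trades: list) -> list:
    rules = []
    stopped = [t for t in trades if t["exit_reason"] == "stop"]
    if len(stopped) >= MIN_SAMPLE:
        recovered = sum(t["later_hit_target"] for t in stopped) / len(stopped)
        if recovered >= 0.4:  # hypothetical trigger level
            rules.append({
                "rule": "widen_stops",
                "evidence": f"{recovered:.0%} of stopped-out trades later recovered past the target",
                "stop_multiplier": 1.5,
            })
    high = [t for t in trades if t["conviction"] == "high"]
    if len(high) >= MIN_SAMPLE:
        win_rate = sum(t["pnl"] > 0 for t in high) / len(high)
        if win_rate < 0.4:  # hypothetical trigger level
            rules.append({
                "rule": "downgrade_high_conviction",
                "evidence": f"high conviction trades have only a {win_rate:.0%} win rate",
            })
    return rules


def enforce(rules: list, proposal: dict) -> dict:
    """Apply rules after the AI has spoken; nothing in the proposal can switch them off."""
    adjusted = dict(proposal)
    for rule in rules:
        if rule["rule"] == "widen_stops":
            adjusted["stop_distance_pct"] *= rule["stop_multiplier"]
        elif rule["rule"] == "downgrade_high_conviction" and adjusted["conviction"] == "high":
            adjusted["conviction"] = "medium"
    return adjusted
```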

Across 51 reflections so far, patterns have emerged. Stops are consistently too tight. Low-conviction trades underperform. And the system is measurably more selective now than it was three weeks ago.

Now imagine this running for a live game. Every balance patch captures its context — what data drove the decision, what the predicted impact was. The system monitors the affected metrics continuously after the patch ships. When the dust settles, it generates a reflection: “We nerfed the shotgun by 15%. Pick rate dropped from 45% to 22%, but average engagement distance shifted — players are camping more.” Those lessons feed into the next balance discussion. Over time, you build institutional memory that doesn’t walk out the door when someone leaves the team.

That’s worth building. That’s worth a demo account and $2 a day in API costs.

The Daily Rhythm

The bot runs 27 scheduled jobs across the trading day, and the structure maps directly onto live-ops.

Pre-market, it checks for new IPO listings and runs a morning brief — overnight moves, sector trends, sentiment. When markets open, it runs the full analysis pipeline on UK stocks, then cycles through macro-aware scans four times during the US session. Every 15 minutes, it checks every open position against live broker data, verifies stop losses are active, and tracks maximum favourable and adverse excursion.
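One way to lay out that rhythm is as a fixed list of timed jobs plus an interval job, expanded into a plan for the day. This is a sketch, not the bot’s actual schedule: the job names and times are illustrative (naive UK local time, ignoring weekends, holidays and daylight-saving drift between London and New York), and it doesn’t attempt to reproduce all 27 jobs.

```python
from datetime import date, datetime, time, timedelta

# Illustrative timed jobs (UK local time); names and times are hypothetical.
FIXED_JOBS = [
    (time(7, 30), "ipo_check"),
    (time(7, 45), "morning_brief"),
    (time(8, 15), "uk_analysis"),
    (time(15, 0), "us_macro_scan"),
    (time(16, 30), "us_macro_scan"),
    (time(18, 0), "us_macro_scan"),
    (time(19, 30), "us_macro_scan"),
    (time(21, 15), "eod_retrospective"),
]
MONITOR_EVERY = timedelta(minutes=15)
MONITOR_WINDOW = (time(8, 0), time(21, 0))  # position checks run inside this window


def day_plan(day: date) -> list:
    """Return (datetime, job_name) pairs for one trading day, in run order."""
    plan = [(datetime.combine(day, t), name) for t, name in FIXED_JOBS]
    t = datetime.combine(day, MONITOR_WINDOW[0])
    end = datetime.combine(day, MONITOR_WINDOW[1])
    while t <= end:
        plan.append((t, "position_check"))
        t += MONITOR_EVERY
    return sorted(plan)
```

A plan like this can then be handed to whatever scheduler you prefer; keeping it as data makes it easy to see the whole day at a glance.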

End of day, it runs a retrospective: for every stock it analysed that day, fetch the closing price and ask — did we get the direction right? It even follows up at day 3 and day 5 to measure medium-term accuracy.
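The retrospective boils down to a hit-rate calculation per horizon. In this sketch the input shapes are assumptions, and “average move” is measured in the predicted direction, so a positive number means the calls were right on average.

```python
def direction_accuracy(predictions: list, closes: dict, horizon_days: int) -> dict:
    """Score calls against closes `horizon_days` after the call (0 = same day).

    predictions: dicts with "ticker", "direction" (+1 up, -1 down) and "ref_price".
    closes: maps (ticker, horizon_days) to a closing price; missing data is skipped.
    """
    hits, signed_moves = 0, []
    for p in predictions:
        close = closes.get((p["ticker"], horizon_days))
        if close is None:
            continue
        move = (close - p["ref_price"]) / p["ref_price"]
        if move * p["direction"] > 0:
            hits += 1
        signed_moves.append(move * p["direction"])  # positive when we called it right
    n = len(signed_moves)
    return {
        "horizon_days": horizon_days,
        "n": n,
        "hit_rate": hits / n if n else None,
        "avg_move_pct": 100 * sum(signed_moves) / n if n else None,
    }
```

Running it for horizons 0, 3 and 5 gives the same-day, day-3 and day-5 views described above.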

The expensive analysis only runs on the 10 most promising candidates, and even those use a mix of cheap and mid-tier models.
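The cost control is mostly a filter plus a routing table. A sketch, assuming a cheap pre-screen score already exists for every candidate; the split of roles between cheap and mid-tier models is hypothetical.

```python
import heapq

TOP_N = 10  # from the post: only the 10 most promising candidates get the full treatment

# Hypothetical routing: which pipeline roles use a cheap model and which a mid-tier one.
ROLE_TIER = {"technical": "cheap", "sentiment": "cheap", "risk": "mid", "coordinator": "mid"}


def shortlist(candidates: list, n: int = TOP_N) -> list:
    # A cheap pre-screen score decides who is worth an expensive analysis.
    return heapq.nlargest(n, candidates, key=lambda c: c["prescreen_score"])


def plan_calls(shortlisted: list) -> list:
    """One (ticker, role, model tier) entry per model call the shortlist will trigger."""
    return [(c["ticker"], role, tier) for c in shortlisted for role, tier in ROLE_TIER.items()]
```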

For a live game, the equivalent rhythm would be: an overnight anomaly report, continuous meta health checks, live KPI monitoring every 15 minutes, and a daily retrospective that reports something like “we flagged 8 issues today; 3 were real, 5 were noise, and our hit rate is improving.” All running autonomously, reporting via Telegram or Slack, learning from its own accuracy.

Four Weeks of Learning the Hard Way

The numbers tell a story, and the story is mostly about how bad the first two weeks were.

Week 1: Five trades. The system barely worked. 437 passing unit tests hadn’t caught a single integration failure. The bot had never actually completed a trade end-to-end. Classic.

Week 2: 78 signals, 10 portfolio resets. This was the “break everything on purpose” phase. Force trades through every pathway. Every reset was a discovered bug — orders that didn’t cancel, stops that didn’t fire, positions that went stale. If you’ve ever shipped a game and watched the first 24 hours of live data pour in, you know this phase intimately.

Week 3: 121 signals, 1 reset. Stabilising. First profitable week — barely, at +$2.47. I briefly considered retiring from game development. The self-learning reflections started flagging “tight stops” as a recurring theme.

Week 4: 108 signals, 0 resets. The system was stable but the market wasn’t. A broad downturn hit and the system took losses, but it adapted — macro-aware defensive selling kicked in, protecting winners and cutting losers faster.

Week 5: 167 signals. Tightening. Raised the technical score floor, tuned defensive sell thresholds, added winner protection. On one particular Monday, our stock picks outperformed the S&P 500 with a 55.6% hit rate and +0.36% average move. On demo money. Let me be very clear about the demo money part.

Total P&L is still negative from those early weeks of stress-testing. But the trajectory is clear: each week, the system gets smarter, more selective, and better at protecting capital. Which is exactly what you want to see in a self-learning system — and exactly what you want to see in a live game’s analytics pipeline as it matures after launch.

Why I’m Writing This

I didn’t set out to build a blueprint for game analytics. I set out to build a trading bot because Trading212 opened the API, I had Claude Code on hand, and I have a well-documented inability to leave interesting technical problems alone.

But four weeks and 31,000 lines of Python later, I’ve accidentally built the most complete real-time analytics and decision-support architecture I’ve ever seen for a live product. Multi-agent analysis, consensus-based decision making, automated feedback loops, self-learning from outcomes, graduated confidence scoring, safety gates that prevent bad decisions from shipping — all running autonomously for $2 a day.

Every live game I’ve worked on would have benefited from something like this. Not the trading part, obviously. The part where you build the loop, make it faster, and let it learn from its own mistakes.

The trading bot might never make me any money. Given my track record, it almost certainly won’t. But the patterns it’s teaching me about real-time decision systems? Those are worth their weight in demo-account gold.

This is Part 1 of a series. Part 2 will dig into the specific patterns that transfer between financial systems and game analytics — including how trade lifecycle tracking maps to player progression curves, consensus panels for balance decisions, and the economics of running multi-agent AI systems at scale.

The code is private (it will trade real money eventually, and I’d rather not give the market any more advantages over me than it already has), but the architectural patterns are universal. If you’re building live-ops systems for games, the trading world has solved a lot of these problems. And if you’re building trading systems, well, game developers have been doing real-time adaptive systems for decades.

If you’re working on something similar or want to compare notes, drop a comment or reach out.